Most recent

Fashion Forever: Discover How to Look Fabulous at Any Age

In the fast-paced and ever-changing world of fashion, age has often been considered ...

Read more

Cybersecurity Attacks You Should Know to Protect Yourself...

Cybersecurity refers to the set of practices, technologies, and processes designed t...

Read more

Challenging the Speed of Sound: In the Footsteps of the Fastest A...

Since the dawn of human civilization, the desire to fly has been a fascination deepl...

Read more

Transform Your Home into a Sanctuary of Beauty: Tips for Creating...

Home is much more than just four walls and a roof. It is a refuge, a place where we ...

Read more

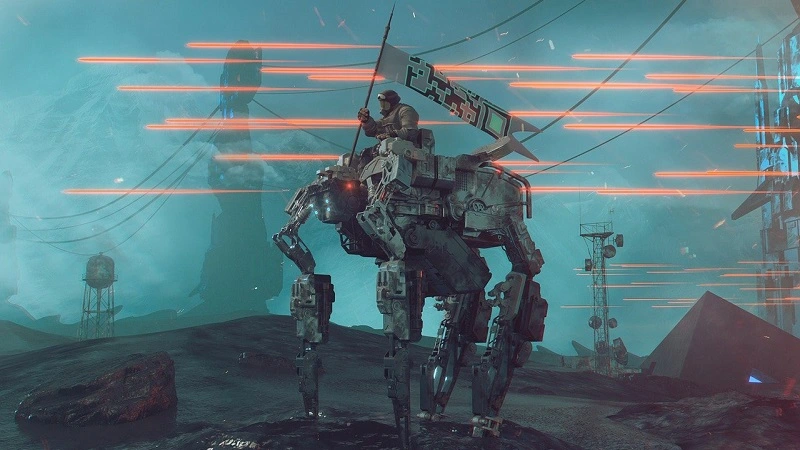

Life After Death 2.0: The Technological Revolution of 'Upload'...

In the digital era we live in, the concept of life after death has taken an unexpect...

Read more

Green and Vibrant: Discover the Most Resilient and Easy-to-Care-f...

Indoor plants have emerged as essential elements in the aesthetics and functionality...

Read more

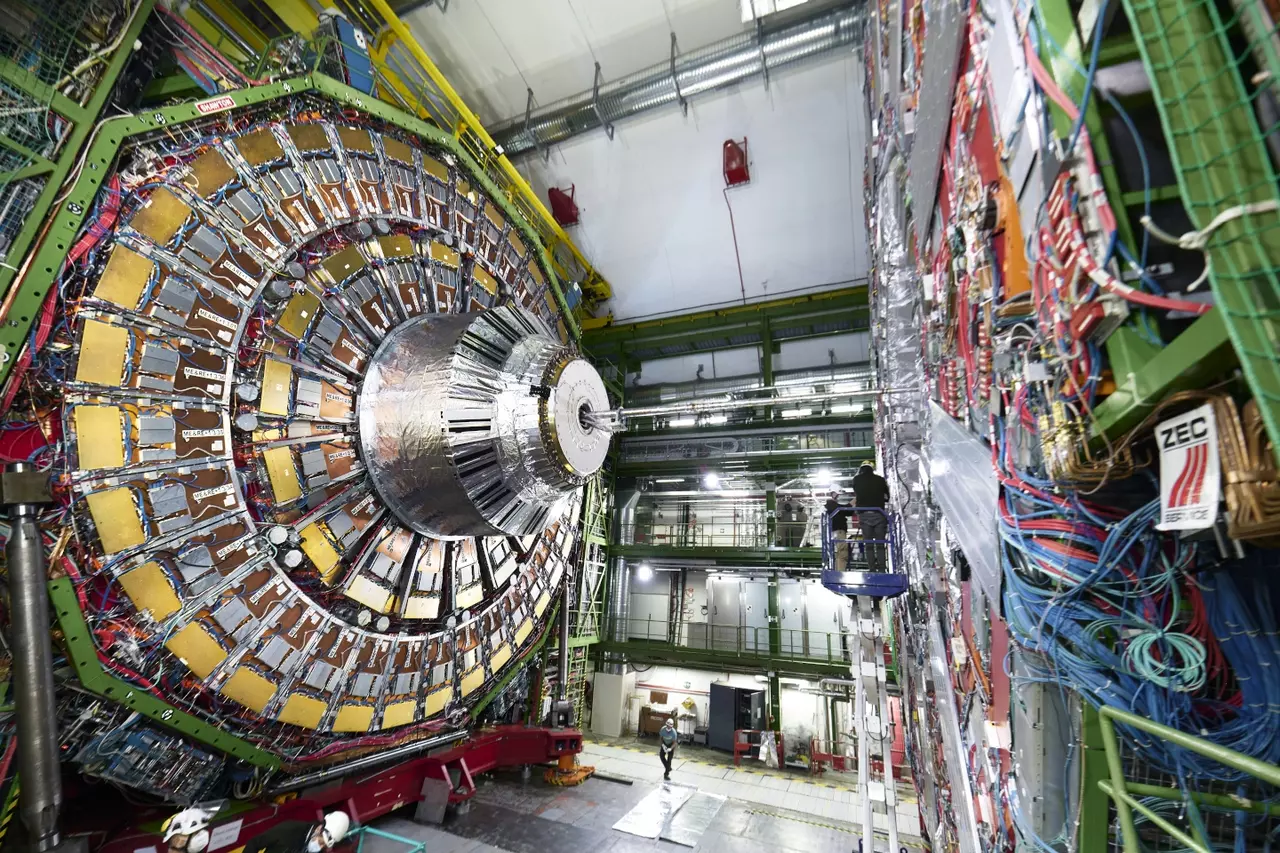

CERN's Experiments and Hidden Dimensions

From the most intimate corners of the atom to the far reaches of the cosmos, humanit...

Read more

Best Collections of Converse Sneakers You Should Know...

In the vast universe of fashion and urban culture, few brands can claim as robust a ...

Read more

Selected for You

10 Sites to Help You Sign Documents Online

Everyone dreams of an office without paper. However, to be completely digital, you need ...

Read more

Software: How Gaming Went Mobile, and What Happens Next...

Electronic gaming has been a growing industry since the first consoles and PCs began cre...

Read more

6 YouTube channels with easy physics explanations in Spanish...

We have a couple of compilations devoted to YouTube channels with educational videos about C...

Read more

Turn your Android tablet into a retro video game machine...

At El Androide Libre, we have always paid attention to the games released for Androi...

Read more

You can play Game Boy Advance on your Android Wear and yes, it...

There is a small group of Android Wear developers who are bent on demonstrating th...

Read more

Why you should download a VPN when you are traveling...

There are many things you need to remember while traveling, and one of them is cyber securi...

Read more

What Makes a Good Mortgage Lender in Canada?

A mortgage loan is an excellent solution for people who want to acquire p...

Read more

Text Mode - Chrome extension that lets you see only text on w...

Until now, when it came to simplifying the visual environment of web pages in order to...

Read more