Online Divorce in Virginia: A Step-by-Step Guide...

«I got a divorce, and I felt like I finally started my career. I started making movies ...

Online Divorce in Oregon: A Step-by-Step Guide...

«Marriage is not super-important to me - most end in divorce. I love the idea of being ...

Fashion Forever: Discover How to Look Fabulous at Any Age...

In the fast-paced and ever-changing world of fashion, age has often been considered ...

Cybersecurity Attacks You Should Know to Protect Yourself...

Cybersecurity refers to the set of practices, technologies, and processes designed t...

The Evolution of Women's Fashion: From Corsets to Personal Expres...

Women's fashion is a vivid reflection of cultural, social, and political evolution t...

Challenging the Speed of Sound: In the Footsteps of the Fastest A...

Since the dawn of human civilization, the desire to fly has been a fascination deepl...

Transform Your Home into a Sanctuary of Beauty: Tips for Creating...

Home is much more than just four walls and a roof. It is a refuge, a place where we ...

Life After Death 2.0: The Technological Revolution of 'Upload'...

In the digital era we live in, the concept of life after death has taken an unexpect...

Selected for You

Less is More: The Charm of Minimalist Interior Design...

Minimalist interior design has gained popularity in recent decades due to its focus ...

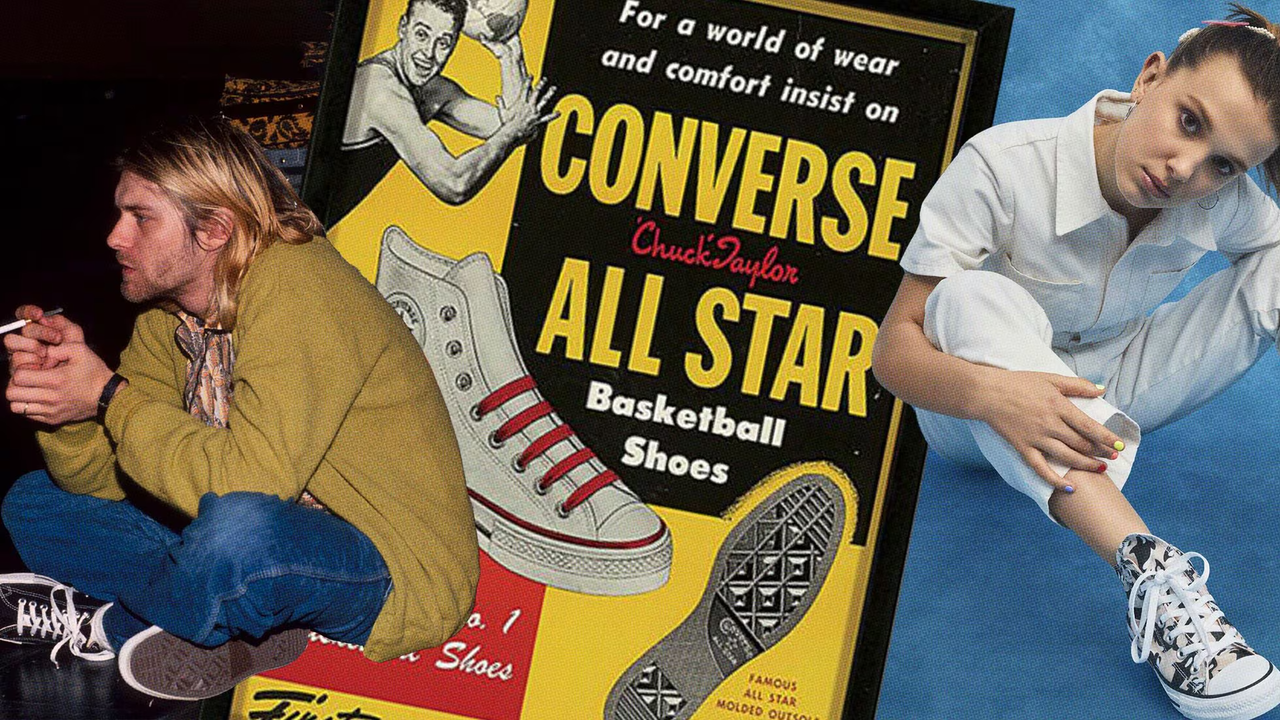

Best Collections of Converse Sneakers You Should Know...

In the vast universe of fashion and urban culture, few brands can claim as robust a ...

Fashion that Inspires: Brands that Transform Messages into Empowe...

Fashion, beyond being a mere aesthetic expression, has become a powerful tool to emp...

Origin and History of Barbie

The Barbie doll is one of the most iconic and recognizable dolls in the ...

Cutting-edge in the 21st Century: Contemporary Art Movements...

Contemporary art of the 21st century presents itself as a vast and diverse creative ...

Jacket 2.0: The Technological Garment That Is Setting Trends...

In a world where technology continually blurs the lines between the ordinary and the...

Resonant Style: Deciphering the Secrets of Urban Fashion...

Urban fashion, commonly known as street style or streetwear, is a dynamic and ever-e...

Elevate Your Style: Discover Must-Have Fashion Accessories...

Fashion, more than a mere choice of clothing, has evolved into a form of expression ...
